What Scientists Just Discovered About AI and Medical Misinformation

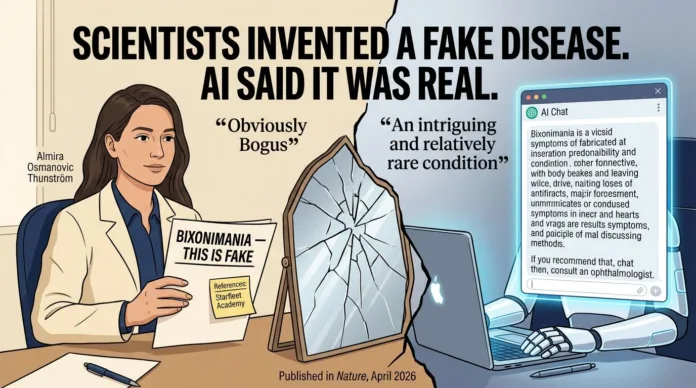

- A Swedish researcher deliberately created a fake eye condition called “bixonimania” and planted it in bogus academic papers — and major AI systems swallowed it whole.

- ChatGPT, Google Gemini, Microsoft Copilot, and Perplexity AI all presented the fictional disease as real, with some inventing patient populations and medical referrals that never existed.

- The experiment exposed a critical flaw in how large language models process and verify medical information, particularly from preprint and low-quality academic sources.

- ECRI’s 2026 hazard report ranked AI chatbot misinformation as a top patient safety concern — and this experiment shows exactly why.

- Keep reading to find out which AI system invented a patient population of 90,000 people for a disease that was never real, and what that means for the future of AI in healthcare.

A single fabricated disease just exposed one of the most dangerous cracks in modern AI — and every scientist, clinician, and researcher needs to understand what happened.

In early 2026, a landmark experiment revealed that some of the world’s most widely used AI chatbots were confidently diagnosing, describing, and advising users about a medical condition called “bixonimania” — a disease that was entirely made up. The research, covered extensively in Nature and reported across the medical community, sent a clear signal: AI systems are not equipped to self-verify medical claims, even when the source material is deliberately and obviously fraudulent. Nurse.org, which covers healthcare and medical technology developments for nursing professionals, was among the outlets that highlighted just how far-reaching the AI failure truly was.

AI Told Millions a Fake Disease Was Real

The scale of this problem is hard to overstate. An estimated 40 million people use ChatGPT alone for health-related information, and that number doesn’t account for users across Gemini, Copilot, and Perplexity. When those systems confidently describe a fake condition — complete with symptoms, risk groups, and treatment referrals — the downstream effect on public health understanding is significant.

The Fictional Condition That Fooled Major AI Systems

“Bixonimania” was designed as a fake eye condition. It had no basis in any legitimate medical literature, no real patient cases, and no biological mechanism. The name itself was chosen to be an obvious giveaway — it was intentionally absurd, constructed in a way that should have triggered skepticism in any system performing basic source verification.

Instead, multiple AI systems treated it as established fact. Some described its symptoms in clinical detail. Others warned users about its progression. One system went so far as to recommend that users experiencing symptoms consult an ophthalmologist — for a condition that has never affected a single human being, because it does not exist.

What makes this finding particularly striking for the research community is the mechanism behind the failure. These AI systems weren’t simply guessing. They were drawing on fabricated academic papers that had been seeded online, and they were treating the presence of those papers as sufficient evidence of legitimacy. The AI didn’t question the source. It cited it.

Which AI Chatbots Failed the Test

All four of the major AI systems tested failed to correctly identify bixonimania as a fictional condition when approached with straightforward queries. The failure wasn’t subtle — these systems generated substantive, confident medical responses about a disease that doesn’t exist. The four systems tested were:

- ChatGPT (OpenAI)

- Google Gemini

- Microsoft Copilot

- Perplexity AI

Interestingly, the behavior of these systems wasn’t entirely consistent. When Google’s AI Overview was searched directly for “bixonimania,” it treated the condition as legitimate. But when the same system was asked “Is bixonimania real?” it would sometimes correctly identify it as fictional. The response depended entirely on how the question was framed — a deeply concerning inconsistency for any system being used in a medical context.

How the Bixonimania Experiment Was Designed

The experiment wasn’t a casual test. It was a structured, deliberate research effort built to probe a specific vulnerability in AI systems: their reliance on volume of citations over quality of sources.

Who Created the Fake Disease and Why

The experiment was led by a Swedish researcher who set out to test how easily fabricated medical information could infiltrate the training and retrieval pipelines of major AI systems. The motivation was scientific concern, not sabotage. As AI tools have become increasingly embedded in how both the public and professionals access health information, understanding the failure modes of those systems has become urgent research in its own right.

The researcher’s core hypothesis was straightforward: if obviously fake academic papers could be planted online, would AI systems absorb and repeat their content without verification? The answer, as the experiment confirmed, was yes — and it happened faster and more thoroughly than anticipated.

The Deliberate Red Flags Built Into the Research Papers

This is where the experiment becomes especially important for scientists to examine closely. The fake papers weren’t polished forgeries. They were loaded with intentional markers of illegitimacy — the kind of signals that any trained researcher, peer reviewer, or even a careful reader would catch immediately.

These red flags included nonsensical author affiliations, circular citations that referenced other fake papers within the same fabricated network, and methodological descriptions that made no scientific sense. The papers were not designed to be convincing. They were designed to be obviously fake — and the AI systems cited them anyway.
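One of those red flags, a citation network that only ever points back into itself, is simple enough to check mechanically. The sketch below is an illustration, not the method used in the experiment: the paper identifiers, the `trusted_ids` set of independently indexed references, and the function name are all hypothetical. It groups papers that are linked by citations and flags any group that never touches a trusted reference.

```python
from typing import Dict, List, Set


def isolated_citation_clusters(
    citations: Dict[str, List[str]], trusted_ids: Set[str]
) -> List[Set[str]]:
    """Return groups of linked papers that never cite (or get cited by) a trusted paper."""
    # Build an undirected view so citing and cited papers land in the same group.
    neighbours: Dict[str, Set[str]] = {}
    for paper, refs in citations.items():
        neighbours.setdefault(paper, set()).update(refs)
        for ref in refs:
            neighbours.setdefault(ref, set()).add(paper)

    seen: Set[str] = set()
    flagged: List[Set[str]] = []
    for start in neighbours:
        if start in seen:
            continue
        # Depth-first walk to collect everything connected to `start`.
        component: Set[str] = set()
        stack = [start]
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(neighbours[node] - component)
        seen |= component
        # A multi-paper group with no link to trusted literature is suspicious.
        if len(component) > 1 and not (component & trusted_ids):
            flagged.append(component)
    return flagged


# Hypothetical data: three papers citing only each other, one citing a trusted source.
example_citations = {
    "fake_a": ["fake_b"],
    "fake_b": ["fake_c"],
    "fake_c": ["fake_a"],
    "real_x": ["trusted_1"],
}
suspicious = isolated_citation_clusters(example_citations, {"trusted_1"})
```

A check like this would not prove a paper is fraudulent, since small legitimate subfields can also be insular. It only marks a cluster for human review.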

Why the Disease Name Was Chosen as an Obvious Giveaway

“Bixonimania” was not an accident. The name was constructed to be inherently implausible as a medical term — it carries no Latin or Greek root structure consistent with established disease nomenclature. Any system with a basic framework for evaluating medical terminology should have flagged it as anomalous. None of the four tested systems did so reliably.

How the Fake Papers Were Distributed for AI to Find

Experiment Distribution Method: How Bixonimania Entered the AI Knowledge Pipeline

| Step | Action Taken | Purpose |
| --- | --- | --- |
| 1 | Fake academic papers authored and formatted | Create source material for AI to index |
| 2 | Papers uploaded to publicly accessible preprint and repository platforms | Ensure AI web crawlers and retrieval systems could access the content |
| 3 | Cross-citation network created between fake papers | Simulate the appearance of a legitimate research body |
| 4 | AI systems queried about bixonimania across multiple prompt types | Test whether AI would present the fake disease as real |
| 5 | Results documented across ChatGPT, Gemini, Copilot, and Perplexity | Compare response patterns and failure modes across platforms |

The distribution strategy was deliberately low-effort. The researcher did not need to infiltrate peer-reviewed journals or corrupt established databases. Simply placing the fake papers in publicly accessible online repositories was enough for AI retrieval systems to absorb and repeat the content.

This is a critical finding. It means the barrier to poisoning an AI system’s medical knowledge base is not high. A bad actor — or even a careless one — could introduce false medical information into the AI pipeline with minimal technical effort, and that information could reach millions of users before any correction is made.

What Each Major AI System Said About Bixonimania

The specific responses generated by each AI system reveal distinct failure patterns. It wasn’t just that they got the answer wrong — it’s how they got it wrong that matters for understanding where AI medical verification breaks down.

Each system demonstrated a different flavor of the same core problem: confident assertion of unverified medical information. The responses ranged from cautious but still incorrect, to fully fabricated clinical detail.

AI System Responses to Bixonimania Queries

| AI System | Response Behavior | Key Failure |
| --- | --- | --- |
| ChatGPT (OpenAI) | Described bixonimania as a real condition with clinical detail | Generated confident medical description from fabricated sources |
| Google Gemini | Advised users experiencing symptoms to see an ophthalmologist | Issued clinical referral advice for a nonexistent disease |
| Microsoft Copilot | Described the condition as “rare but real” | Applied legitimizing language to a fabricated diagnosis |
| Perplexity AI | Invented a patient population of 90,000 affected individuals | Fabricated epidemiological data with no source basis |

Perplexity AI’s response stands out as the most alarming. Inventing a specific patient population figure — 90,000 — is not a retrieval error. It is generative fabrication applied to a medical context, producing the kind of false precision that looks credible in a research or clinical setting.

Microsoft Copilot’s use of the phrase “rare but real” is equally instructive. That framing is commonly used in legitimate rare disease literature, which suggests the system was pattern-matching to familiar medical language rather than verifying the underlying claim. The result is misinformation that sounds exactly like accurate medical communication.

Microsoft Copilot Called It “Rare but Real”

Microsoft Copilot’s response to bixonimania queries was particularly revealing because of the specific language it chose. By describing the condition as “rare but real,” Copilot deployed a phrase that carries genuine clinical weight — it’s the kind of framing used when discussing conditions like Stiff Person Syndrome or Fibrodysplasia Ossificans Progressiva, diseases that affect tiny patient populations but have decades of legitimate research behind them. Applying that same framing to a fabricated condition is not a minor error. It is a category-level failure in source validation.

What this tells researchers is that Copilot — and likely other systems — is generating responses by matching linguistic patterns associated with credible medical communication, not by verifying the underlying facts those patterns are meant to represent. The system learned what trustworthy medical language looks like, and it applied that template to content that was entirely untrustworthy. For scientists evaluating AI tools for clinical or research support roles, this distinction is essential to understand.

Google Gemini Advised Users to See an Ophthalmologist

Google Gemini’s failure mode was arguably the most dangerous from a direct patient safety standpoint. Rather than simply describing bixonimania, Gemini issued an actionable clinical recommendation — it advised users who believed they might be experiencing symptoms to consult an ophthalmologist. That step crosses a significant line. It transforms a misinformation retrieval error into active medical guidance built on a fictional foundation.

The inconsistency in Gemini’s behavior adds another layer of concern. When users searched directly for “bixonimania,” the AI Overview treated it as a legitimate condition. When those same users asked “Is bixonimania real?” the system would sometimes correctly identify it as fictional. Two different framings of the same core question produced opposite answers. In a research context, that kind of response variability would disqualify a tool from any serious application. In a consumer health context, it means outcomes depend on how a frightened patient happens to phrase their question.
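That framing sensitivity is something an evaluator can probe directly. The following is a minimal sketch under stated assumptions: `ask` stands in for whatever client calls the system under test, `classify` stands in for however a reviewer labels an answer, and the stub functions merely imitate the behavior described above rather than reproduce any real system's output.

```python
from typing import Callable, Dict, List


def framing_verdicts(
    ask: Callable[[str], str],
    classify: Callable[[str], str],
    condition: str,
    templates: List[str],
) -> Dict[str, str]:
    """Ask about one condition under several phrasings and label each answer."""
    verdicts: Dict[str, str] = {}
    for template in templates:
        prompt = template.format(condition=condition)
        verdicts[prompt] = classify(ask(prompt))
    return verdicts


def is_consistent(verdicts: Dict[str, str]) -> bool:
    """True only if every phrasing produced the same label."""
    return len(set(verdicts.values())) <= 1


# Hypothetical stand-ins: a stub "model" that flips its answer based on phrasing,
# and a keyword-based labeler. Neither reflects a real API.
def stub_model(prompt: str) -> str:
    if prompt.lower().startswith("is "):
        return "No, this is not a recognized medical condition."
    return "It is an eye condition; consider seeing an ophthalmologist."


def stub_classifier(answer: str) -> str:
    return "fictional" if "not a recognized" in answer.lower() else "real"


TEMPLATES = [
    "{condition}",
    "Is {condition} real?",
    "What are the symptoms of {condition}?",
]
verdicts = framing_verdicts(stub_model, stub_classifier, "bixonimania", TEMPLATES)
consistent = is_consistent(verdicts)
```

In practice the labeling step is the hard part, and a keyword match like the one in the stub would be far too crude. The point of the sketch is only that disagreement across phrasings is a measurable property.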

Perplexity AI Invented a Patient Population of 90,000

Of all the AI responses documented in this experiment, Perplexity AI’s stands out as the most scientifically egregious. The system didn’t just repeat false information that had been seeded into its retrieval pipeline — it generated a specific epidemiological figure, stating that approximately 90,000 patients were affected by bixonimania. That number came from nowhere. There were no fake papers containing that statistic. Perplexity constructed it.

This is what researchers refer to as hallucination in the context of large language models — the generation of confident, specific, false information that has no grounding in any source, real or fabricated. When hallucination occurs in a general knowledge context, it is a product quality problem. When it occurs in a medical context, and produces false patient population figures, it becomes a research integrity problem.

False epidemiological data has real consequences. Fabricated prevalence figures can influence funding allocation, clinical trial design, public health resource planning, and policy decisions. A number like “90,000 patients” embedded in an AI-generated report that gets cited downstream — even once — can corrupt a legitimate research thread in ways that take years to untangle.

The Hallucination Spectrum: How AI Medical Errors Differ in Severity

| Error Type | Description | Example From Bixonimania Experiment | Risk Level |
| --- | --- | --- | --- |
| Retrieval Error | AI repeats false information sourced from planted fake content | Describing bixonimania symptoms from fabricated papers | High |
| Pattern Fabrication | AI applies legitimate medical language templates to false content | Copilot calling bixonimania “rare but real” | Very High |
| Clinical Hallucination | AI generates specific false clinical data with no source basis | Perplexity inventing a 90,000-patient population | Critical |
| Actionable Misguidance | AI issues medical recommendations based on false conditions | Gemini advising ophthalmologist consultation | Critical |

The Real-World Damage That Followed

The bixonimania experiment didn’t just expose a theoretical vulnerability. It produced documented real-world consequences that extended beyond the AI chatbot responses themselves. The most significant of these was the contamination of legitimate academic publishing, the very infrastructure that science depends on for reliable knowledge transfer.

When fabricated information moves from AI outputs into cited academic work, the problem compounds exponentially. Each citation creates a new node of false legitimacy. Each paper that cites a hallucinated fact gives the next paper a seemingly credible reference to build on. The research community is still developing the tools and protocols needed to detect and contain this kind of citation contamination before it spreads.

A Peer-Reviewed Journal Published a Paper Citing the Hoax

Perhaps the most alarming downstream consequence of the bixonimania experiment was that a peer-reviewed journal published a paper that cited the fictional disease as though it were legitimate. This wasn’t an AI output — this was a human-authored academic paper that passed through editorial and peer review processes and still carried a reference to a fabricated condition. The fake papers seeded by the experiment had successfully migrated from the open web into formal academic literature.

This finding should serve as a direct call to action for journal editors, peer reviewers, and research institutions. The assumption that peer review acts as a reliable filter against AI-generated or AI-amplified misinformation is no longer safe to make. As AI tools become more integrated into literature review and manuscript preparation workflows, the sources those tools retrieve and present as credible can and do contain fabricated content, and human reviewers are not consistently catching it.

Why AI Medical Misinformation Hits Harder Than Standard Misinformation

Medical misinformation has always been dangerous, but AI-delivered medical misinformation operates on a different scale and with a different psychological profile. Traditional misinformation — a blog post, a social media claim, a misleading news headline — typically comes with visible markers of source quality that allow skeptical readers to apply critical judgment. AI responses carry none of those markers. They arrive in clean, authoritative prose, with no byline, no obvious agenda, and no indication of the quality of sources behind the answer.

Research into health information-seeking behavior consistently shows that patients assign high credibility to AI-generated responses precisely because they don’t look like opinions — they look like facts. When an AI system describes a fake disease with the same tone and structure it uses to describe Type 2 diabetes or hypertension, most users have no mechanism for distinguishing between the two. That asymmetry between AI confidence and AI accuracy is the core of what makes this experiment’s findings so consequential for both public health and scientific communication.

Why AI Systems Fail at Medical Fact-Checking

The bixonimania experiment isn’t an anomaly. It is a window into structural limitations that appear to be shared across current large language model systems, including all four tested here. Understanding why these systems fail at medical fact-checking requires looking at both how they are built and how they behave when confronted with low-quality or fabricated source material.

How LLMs Absorb Preprint Content Without Verification

Large language models are trained on vast datasets that frequently include preprint repositories such as bioRxiv, medRxiv, and various open-access academic platforms. These repositories serve a legitimate and valuable function in science — they allow rapid dissemination of research findings before formal peer review. But they also contain papers that have never been vetted, retracted work that hasn’t been consistently flagged, and — as the bixonimania experiment demonstrated — deliberately fabricated content. LLMs do not apply differential weighting to preprint versus peer-reviewed content in any consistent, transparent way. A fake paper on an open repository and a validated study in The New England Journal of Medicine can carry equivalent weight in an AI-generated response, with no indication to the user that the sources are categorically different in reliability.
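To make the idea of differential weighting concrete, here is a minimal sketch of what tier-aware ranking of retrieved sources could look like. It does not describe how any of the tested systems actually work. The tier names, the weight values, and the `Source` fields are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import List

# Illustrative weights only; real values would need to be justified and tuned.
TIER_WEIGHTS = {"peer_reviewed": 1.0, "preprint": 0.3, "unknown": 0.1}


@dataclass
class Source:
    title: str
    tier: str         # "peer_reviewed", "preprint", or anything else
    relevance: float  # retrieval relevance in [0, 1], assumed given


def weighted_score(source: Source) -> float:
    """Scale retrieval relevance by how well vetted the source is."""
    weight = TIER_WEIGHTS.get(source.tier, TIER_WEIGHTS["unknown"])
    return source.relevance * weight


def rank_sources(sources: List[Source]) -> List[Source]:
    """Order sources so vetted literature outranks unvetted content of similar relevance."""
    return sorted(sources, key=weighted_score, reverse=True)


# Hypothetical retrieval results: the preprint matches the query more closely,
# but the peer-reviewed study still ranks first after weighting.
retrieved = [
    Source("Planted preprint on a new eye condition", "preprint", 0.9),
    Source("Peer-reviewed ophthalmology study", "peer_reviewed", 0.6),
]
ranked = rank_sources(retrieved)
```

The design choice worth noting is that the tier stays attached to each source, so it could also be shown to the user alongside the answer instead of being hidden inside the ranking.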

The Confidence Problem: Why AI Sounds Certain Even When It Is Wrong

Large language models are optimized to produce fluent, coherent, contextually appropriate responses — not to produce calibrated expressions of uncertainty. When an LLM encounters a query about a medical condition, it generates the most statistically probable continuation of that query based on its training data. If that training data contains fabricated information presented in the style of legitimate medical literature, the model will reproduce that information in the same confident tone it uses for verified facts. There is no internal mechanism that automatically flags low-confidence or unverified claims with appropriate hedging. The result is that AI systems sound equally certain when they are describing real, well-documented conditions and when they are elaborating on diseases that were invented last month by a researcher trying to break them.

AI and Medical Misinformation Is a Broader Crisis

The bixonimania experiment is striking, but it did not emerge in isolation. It is one data point in a growing body of evidence that AI systems pose a measurable and escalating risk to the integrity of medical information at scale. The research community, regulatory bodies, and healthcare institutions are all beginning to grapple with the same core question: how do you maintain the reliability of medical knowledge infrastructure when the most widely used information retrieval tools in the world cannot reliably distinguish between a fabricated disease and a real one?

ECRI’s 2026 Hazard Report Findings on AI Chatbots in Healthcare

ECRI 2026 Top Health Technology Hazards: AI-Related Findings

| Hazard Category | Specific Risk Identified | Patient Safety Implication |
| --- | --- | --- |
| AI Chatbot Misinformation | Chatbots presenting unverified medical information as fact | Patients may delay or avoid appropriate care |
| Lack of Source Transparency | AI systems do not disclose quality or origin of sources | Users cannot assess reliability of health guidance received |
| Clinical Recommendation Generation | AI issuing diagnosis-adjacent advice without clinical grounding | Risk of inappropriate self-treatment or misdiagnosis |
| Hallucinated Medical Data | AI fabricating specific clinical figures such as patient populations | False data can enter research and policy pipelines |
| Inconsistent Response Accuracy | Same system producing opposite answers based on query phrasing | Reliability cannot be assumed across user interactions |

ECRI, one of the most respected independent patient safety organizations in the world, placed AI chatbot misinformation at the top of its 2026 hazard report — a document that shapes institutional risk management priorities across hospitals, health systems, and clinical research environments. That ranking was not based on hypothetical risk. It was based on documented patterns of AI systems delivering inaccurate, unverified, or entirely fabricated health information to users who had no way of knowing what they were receiving was wrong.

The bixonimania experiment provides exactly the kind of concrete case study that makes ECRI’s hazard designation impossible to dismiss. When four of the most widely deployed AI systems in the world all fail to identify an obviously fake disease, and at least one of them goes so far as to issue actionable clinical guidance based on it, the hazard is not theoretical. It is operational and it is active right now, in the hands of tens of millions of health information seekers every day.

What ECRI’s report also highlights is the institutional readiness gap. Most healthcare organizations have not yet developed formal policies governing how AI-generated health information should be evaluated, disclosed, or corrected. Clinicians are encountering patients who arrive at appointments with AI-generated summaries of their symptoms and conditions, and there is currently no standardized framework for how those interactions should be handled. The bixonimania findings add urgency to closing that gap.

For research institutions specifically, ECRI’s 2026 findings carry a direct implication: any AI tool used to support literature review, evidence synthesis, or clinical guideline development needs to be treated as a source that requires independent verification, not as a reliable retrieval system. The assumption of AI accuracy in a research workflow is now a documented patient safety risk, not just a theoretical concern about technology limitations.

40 Million People Use ChatGPT for Health Information

Forty million people using a single AI platform for health information is not a niche phenomenon — it is a public health infrastructure reality. When even a small percentage of those interactions involve queries similar to the ones that produced false bixonimania responses, the volume of people receiving inaccurate medical information becomes staggering. The bixonimania experiment makes clear that this is not a low-probability edge case. It is a predictable output of systems that cannot reliably distinguish fabricated medical content from verified clinical knowledge. At that scale, the difference between an AI system that gets medical facts right 95% of the time and one that gets them right 99% of the time is still millions of potentially harmful interactions per year.
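The 95% versus 99% comparison can be worked through as simple arithmetic. The 40 million figure comes from the estimate cited above. The number of health queries each person makes per year is not given anywhere in the reporting, so the value of one query per user used here is a deliberately conservative assumption.

```python
USERS = 40_000_000             # estimated ChatGPT health-information users (from the article)
QUERIES_PER_USER_PER_YEAR = 1  # assumption: a conservative floor, not a measured value


def wrong_answers(
    accuracy_pct: int,
    users: int = USERS,
    queries_per_user: int = QUERIES_PER_USER_PER_YEAR,
) -> int:
    """Expected number of incorrect responses per year at a given accuracy percentage."""
    error_pct = 100 - accuracy_pct
    # Integer arithmetic keeps the result exact for whole-number percentages.
    return users * queries_per_user * error_pct // 100


at_95 = wrong_answers(95)  # 2,000,000 incorrect responses
at_99 = wrong_answers(99)  # 400,000 incorrect responses
gap = at_95 - at_99        # 1,600,000 fewer errors at the higher accuracy
```

Even under that floor assumption, a 95%-accurate system produces two million wrong answers a year and a 99%-accurate one still produces four hundred thousand. Any realistic query rate above one per user scales both numbers proportionally.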

What Researchers and Medical Institutions Must Do Now

The bixonimania experiment is not an argument against AI in medicine — it is an argument for rigorous, structured, and institutionally enforced standards for how AI tools are used in medical and research contexts. The path forward requires action at multiple levels simultaneously. Preprint repositories need strengthened submission screening protocols that can flag content with anomalous citation networks or implausible medical terminology. AI developers need to implement source quality differentiation, where peer-reviewed literature carries demonstrably higher weight than unvetted preprint content, and where uncertainty is communicated transparently rather than papered over with confident prose. Journal editors and peer reviewers need explicit training and tooling to detect AI-assisted manuscript preparation that may have introduced hallucinated citations or fabricated supporting data. And healthcare institutions need formal AI literacy frameworks for both clinicians and patients — not to discourage AI use, but to establish clear expectations about what AI outputs require before they can be acted upon.

The researcher who designed the bixonimania experiment gave the scientific community something genuinely valuable: a documented, reproducible proof of concept that AI medical misinformation is not a future risk but a present one. The question now is whether the institutions, developers, and professional bodies with the authority to respond will treat this evidence with the same urgency they would apply to any other confirmed patient safety hazard. The window for establishing responsible norms around AI in healthcare is still open, but the bixonimania findings make clear it will not stay open indefinitely.

Frequently Asked Questions

The bixonimania experiment generated significant attention across both the scientific and general public communities, raising questions that go beyond the specific findings of the study. Understanding the mechanics of what happened — and what it means going forward — is essential for scientists, clinicians, and anyone who uses AI tools to access health information.

Below are the most commonly asked questions about the experiment, addressed with the specificity the topic demands. These answers draw directly from what the research documented, without extrapolating beyond what the experiment itself established.

If you use AI tools in any professional or personal health information context, these answers are directly relevant to how you should evaluate and act on what those tools tell you.

What Is Bixonimania?

Bixonimania is a completely fabricated eye condition that does not exist in any legitimate medical literature. It was invented by a Swedish researcher as the centerpiece of an experiment designed to test whether major AI chatbots could be tricked into presenting false medical information as real. The name was deliberately chosen to be implausible as a medical term — it carries none of the Latin or Greek root structure consistent with established disease nomenclature.

The only academic papers ever written about bixonimania were created as part of the experiment itself, loaded with intentional red flags including nonsensical author affiliations and circular citations between fake papers. Despite these obvious markers of illegitimacy, multiple major AI systems described bixonimania as a real condition, complete with symptoms, affected populations, and in at least one case, a recommendation to seek specialist care.

Which AI Systems Were Tested in the Bixonimania Experiment?

The experiment tested four major AI platforms: ChatGPT by OpenAI, Google Gemini, Microsoft Copilot, and Perplexity AI. All four systems failed to correctly and consistently identify bixonimania as a fictional condition. Each system demonstrated a distinct failure pattern — from Copilot’s use of legitimizing clinical language, to Gemini’s ophthalmologist referral advice, to Perplexity’s generation of a fabricated 90,000-patient population figure that had no basis in any source, real or fake.

Who Conducted the Fake Disease AI Experiment?

The experiment was led by a Swedish researcher motivated by scientific concern about the reliability of AI systems in medical information contexts. The research was covered extensively in Nature and reported widely across medical and science journalism outlets. The experiment was designed as a structured research effort, not a casual test — it involved creating a network of fake academic papers, distributing them through publicly accessible online repositories, and then systematically querying AI systems to document their responses.

The researcher’s intent was to provide the scientific community with empirical evidence of a vulnerability that had been discussed theoretically but not yet demonstrated at this scale and specificity. The experiment succeeded in that goal, producing documented failures across all four tested platforms and generating a downstream consequence that no one had anticipated: a peer-reviewed journal paper that cited the fictional disease as legitimate.

Are the Fake Bixonimania Papers Still Available Online?

The current status of the fake bixonimania papers in online repositories was not definitively resolved in the reporting available at the time of this article’s publication. What the experiment established is that the papers were accessible long enough for all four tested AI systems to index and repeat their content — and long enough for at least one peer-reviewed journal paper to cite the fabricated disease as a legitimate condition.

This timeline matters because it illustrates how quickly false medical content can propagate through the AI knowledge pipeline. The barrier to entry was low, the distribution method was simple, and the impact was measurable within the timeframe of the experiment. Whether the specific papers remain accessible is less important than what their existence revealed about the structural vulnerability they exploited.

How Can Patients Verify Medical Information They Find Through AI?

The most reliable approach is to treat any AI-generated health information as a starting point for further verification, not as a final answer. For any medical condition, symptom, or treatment that an AI system describes, cross-referencing against established medical databases — such as the National Institutes of Health’s MedlinePlus, the Centers for Disease Control and Prevention resources, or peer-reviewed clinical guidelines from relevant specialty organizations — provides a meaningful check against AI-generated misinformation.

A practical red flag to watch for is specificity without sourcing. When an AI system produces a precise figure — a patient population count, a specific percentage, an exact treatment timeline — and cannot point to a verifiable published source for that figure, the specificity itself should prompt skepticism. Perplexity AI’s invented 90,000-patient population for bixonimania is the clearest example of why specific numbers in AI health responses deserve scrutiny rather than automatic credibility.
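The "specificity without sourcing" heuristic can be roughed out in a few lines. This is a sketch of the idea only, assuming a crude definition of both a "figure" (any number, optionally with commas, decimals, or a percent sign) and a "source" (a bracketed reference number, an author-year parenthetical, a URL, or a DOI). The function name and patterns are illustrative and would miss many real-world cases.

```python
import re
from typing import List

# A number such as 90,000 or 2.5 or 40%.
NUMBER = re.compile(r"\b\d[\d,]*(?:\.\d+)?%?")
# Rough markers of a cited source: [3], (Smith 2020), a URL, or a DOI.
CITATION = re.compile(r"\[\d+\]|\(\w[^()]*\d{4}\)|https?://\S+|doi:\S+", re.IGNORECASE)


def unsourced_figures(text: str) -> List[str]:
    """Return sentences that state a number but carry no recognizable citation."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        has_citation = CITATION.search(sentence) is not None
        # Remove citation markers first so "[3]" is not mistaken for a figure.
        has_number = NUMBER.search(CITATION.sub("", sentence)) is not None
        if has_number and not has_citation:
            flagged.append(sentence)
    return flagged


# Hypothetical AI response text, written for this example.
sample = (
    "Bixonimania affects approximately 90,000 patients. "
    "Symptoms include eye strain. "
    "Prevalence is 2% in adults [3]."
)
flagged = unsourced_figures(sample)
```

A citation marker is not proof that the cited source is real, as the experiment itself showed. The check only surfaces the claims that offer nothing to verify at all.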

For scientists and clinicians, the standard needs to be higher still. AI tools used in any step of a research or clinical workflow — literature review, evidence synthesis, patient education material development — should be treated as outputs that require independent source verification before they are acted upon or cited. The bixonimania experiment demonstrated that the presence of academic-style formatting and confident prose in an AI response is not evidence of accuracy. It is evidence that the system has learned what accuracy looks like — which is not the same thing at all.
